Facing the Tech Risks of 2025 and Beyond Without Falling Behind

Balancing Innovation with Security in a Digital-First World

Technology moves fast — and in 2025, it’s moving faster than ever. Artificial intelligence, connected devices, remote work platforms, and advanced automation are powering everything from healthcare to education to logistics.

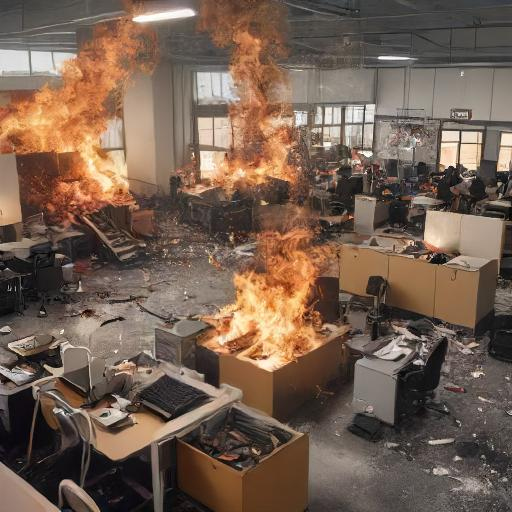

But there’s a sharp edge to this innovation boom. As we adopt smarter systems, we also face smarter risks.

From AI-powered scams to ransomware kits that anyone can buy on the dark web, tech-driven threats are growing in scale, speed, and sophistication. And if we don’t handle them wisely, they can derail progress, damage trust, and leave people deeply vulnerable.

What Are “Technology-Driven Risks”?

These are risks that arise because of digital technology or are delivered through it. Think:

- AI misuse – like deepfake videos or manipulated data;

- Ransomware-as-a-Service – cybercrime you can subscribe to;

- IoT vulnerabilities – when your smart fridge can be hacked;

- Data privacy breaches – exposing personal info at scale;

- Algorithmic bias – where tech decisions reinforce inequality.

They’re not science fiction — they’re happening right now, often behind the scenes.

Why It Matters More Than Ever in 2025

We’ve reached a tipping point where:

The tools of innovation are also tools of exploitation

The same AI that boosts efficiency can be hijacked to spread false information or commit fraud. If we’re not careful, we open the door to harm while trying to do good.

Regulation is catching up — fast

Governments around the world are cracking down on data misuse, AI ethics violations, and cybersecurity lapses, with laws such as the EU’s GDPR and AI Act setting the pace. If you’re not compliant, you’re not just behind; you could be legally exposed.

Every connection is a potential risk

The more we digitise — from smart factories to remote classrooms — the more entry points attackers have. The “attack surface” keeps growing, and many organisations are still playing catch-up.

How to Embrace Technology Without Letting It Hurt You

The goal isn’t to stop innovating — it’s to build safety into the process. Here’s how:

1. Think Security From Day One

Don’t wait until you launch to ask, “Is this safe?” Whether you’re rolling out a new app, platform, or AI model, factor in security during the design phase.

2. Make Privacy a Standard, Not a Slogan

Be transparent about what data you collect, why, and how it’s protected. Use tools like encryption and anonymisation to protect user info — even from internal misuse.
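As a rough illustration of protecting user info from internal misuse, here is a minimal Python sketch that replaces direct identifiers with keyed hashes before a record reaches analysts. Note that this is pseudonymisation, which is weaker than full anonymisation: anyone holding the key can recompute the tokens. The function names, field names, and key handling are all assumptions made for the example, not a prescribed design.

```python
import hashlib
import hmac


def pseudonymise(value: str, secret_key: bytes) -> str:
    """Return a keyed SHA-256 hash of value.

    The same value and key always give the same token, so records can
    still be joined, but the raw value is not stored.
    """
    normalised = value.strip().lower().encode("utf-8")
    return hmac.new(secret_key, normalised, hashlib.sha256).hexdigest()


def strip_direct_identifiers(record: dict, id_fields: set, secret_key: bytes) -> dict:
    """Copy record, replacing every field named in id_fields with a pseudonym.

    id_fields is a hypothetical list of columns you consider identifying,
    such as an email address or phone number.
    """
    return {
        field: pseudonymise(str(val), secret_key) if field in id_fields else val
        for field, val in record.items()
    }


if __name__ == "__main__":
    # Assumption: in practice the key comes from a secrets manager,
    # never from source code.
    key = b"replace-with-a-real-secret"
    raw = {"email": "Alice@Example.com", "plan": "pro"}
    print(strip_direct_identifiers(raw, {"email"}, key))
```

The keyed hash (HMAC) matters here: a plain unkeyed hash of an email address can often be reversed by hashing a list of known addresses and comparing.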

3. Educate Everyone, Not Just Tech Teams

Scams and AI deception often rely on human error. Train your people to spot deepfakes, phishing links, and suspicious behaviour. Awareness is one of your best defences.

4. Audit Your Algorithms and AI

If your systems make decisions about people (hiring, benefits, services), check for bias and unintended consequences. Use training data that represents the people affected, audit outcomes regularly, and make someone clearly accountable for acting on the findings.
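One simple starting point for such an audit is to compare how often each group receives a favourable decision. The Python sketch below computes per-group selection rates and the ratio of the lowest rate to the highest. A ratio under 0.8 is a common rule of thumb for "look closer" (the "four-fifths rule" from US employment guidelines), not proof of bias or a legal test. The group labels and sample data are invented for the example.

```python
from collections import defaultdict


def selection_rates(decisions):
    """Map each group to the share of its members who were selected.

    decisions is an iterable of (group, was_selected) pairs.
    """
    totals = defaultdict(int)
    selected = defaultdict(int)
    for group, was_selected in decisions:
        totals[group] += 1
        if was_selected:
            selected[group] += 1
    return {group: selected[group] / totals[group] for group in totals}


def disparate_impact_ratio(rates):
    """Return the lowest group rate divided by the highest (1.0 = equal rates)."""
    if not rates:
        raise ValueError("no decisions to audit")
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was selected in any group, so rates are equal
    return min(rates.values()) / highest


if __name__ == "__main__":
    # Invented sample: group A selected 8 times out of 10, group B 4 out of 10.
    sample = (
        [("A", True)] * 8 + [("A", False)] * 2
        + [("B", True)] * 4 + [("B", False)] * 6
    )
    rates = selection_rates(sample)
    print(rates, disparate_impact_ratio(rates))
```

A single ratio hides a lot, so treat a low value as a prompt to examine the data and the decision logic rather than as a verdict.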

5. Prepare for Failure — Because It Will Happen

Have clear response plans for data breaches, service interruptions, or tech failures. The faster you contain and recover from an incident, the less damage it causes.

Leading With Confidence in a Digital World

You don’t need to be a technologist to lead securely in 2025. You just need to:

- Stay curious;

- Ask tough questions about risk;

- Insist on transparency;

- Align innovation with ethical standards.

Technology should serve people — not the other way around. With the right balance of boldness and caution, you can harness the full power of digital transformation without compromising your integrity, your data, or your community.